One of the most talked‑about names in recent developer communities is OpenClaw.

OpenClaw is a personal AI agent that runs 24/7 on machines you control, such as laptops, home servers, or cloud instances.

You can send it commands through the messaging apps you already use every day, such as WhatsApp, Telegram, Discord, and Slack, and OpenClaw will carry them out for you. People say it feels like JARVIS, the AI assistant from the Iron Man movies.

For example, if you find it tedious to gather the information you need every day for investing or work, you can use OpenClaw as a research agent.

If you configure it with “Summarize today’s top 5 AI industry news items and post them to Slack,” you will find a neatly organized news briefing in Slack every morning before you go to work.

If you have a massive report or whitepaper, you can simply say “Summarize the key points of this PDF in three lines,” and it will handle it instantly. Thanks to this convenience, its GitHub star count soared past 100,000 shortly after launch, and it has spread rapidly, drawing intense interest as major global media outlets continue to cover it.

OpenClaw is especially popular because of its local‑first execution model, which keeps your data on your own infrastructure.

This has even sparked a wave of Mac mini buying in the United States, as people set up dedicated machines to run it around the clock.

OpenClaw offers a seamless user experience by naturally integrating with the messaging apps you already use.

As an open-source project, its architecture can be freely extended and customized.

But this is precisely where a new question arises.

“If this agent can access your company’s codebase, internal systems, and sensitive data, is the agent itself secure?”

[Structural risk of OpenClaw]

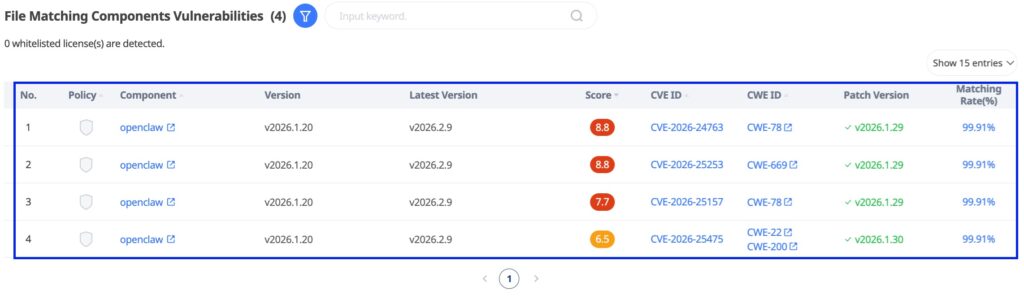

The CVE‑2026‑25253 vulnerability discovered in OpenClaw in January 2026 is not a simple bug.

An attacker can exploit this vulnerability to steal user authentication tokens and achieve remote code execution (RCE) with just a single click.

This case shows that when open-source software with powerful privileges and a broad integration surface, such as an AI agent, is incorporated into the software supply chain, a single weak link can become a risk to the entire system.

AI accelerates innovation. But at the same speed, the complexity of software supply chain security is also increasing.

[One “weak link” can break everything]

The technical explanation of this vulnerability can be complex. However, from a supply chain perspective, the structure is simple.

1️⃣ External input was not sufficiently validated.

2️⃣ An integration path was designed to connect automatically.

3️⃣ Authentication data traveled along that connection.
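The three conditions above can be broken with one rule: validate an externally supplied endpoint before connecting to it automatically, and attach credentials only after that check passes. The sketch below assumes a hypothetical UI that reads a gateway address from a clicked link's query string; the `gateway` parameter name, the port 8080, and the allowlist are illustrative placeholders, not OpenClaw's actual code.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Hypothetical allowlist of gateway endpoints the UI may connect to on its own.
# Scheme, host, and port must all match; port 8080 is a placeholder.
ALLOWED_GATEWAYS = {("ws", "127.0.0.1", 8080), ("ws", "localhost", 8080)}


def gateway_from_link(link: str) -> Optional[str]:
    """Read a hypothetical `gateway` query parameter from a clicked link."""
    values = parse_qs(urlparse(link).query).get("gateway")
    return values[0] if values else None


def is_trusted_gateway(url: str) -> bool:
    """Accept only endpoints that exactly match the allowlist."""
    parsed = urlparse(url)
    try:
        port = parsed.port  # raises ValueError for a malformed port
    except ValueError:
        return False
    return (parsed.scheme, parsed.hostname, port) in ALLOWED_GATEWAYS


def maybe_auto_connect(link: str, token: str) -> Optional[dict]:
    """Return connection details only when the target is trusted.

    The token is attached after validation, so an attacker-supplied
    URL never receives it.
    """
    gateway = gateway_from_link(link)
    if gateway is None or not is_trusted_gateway(gateway):
        return None  # refuse instead of silently connecting
    return {"url": gateway, "auth": token}
```

A link pointing at `wss://attacker.example:8080` yields `None`, so no connection is attempted and the token stays put. The design choice is deny-by-default: an unknown endpoint is rejected rather than trusted.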

What was the result?

An attacker could steal the user’s authentication token, use its administrative privileges to change security settings, and ultimately execute commands on the victim’s PC.

No matter how strong your security features are, a single small design decision made at the architecture stage can undermine the entire system.

[The misconception that “it’s safe because it runs locally”]

Many organizations think this way. “Isn’t it safer because it runs locally rather than as SaaS?”

This incident shows that this is not necessarily the case.

Once the user’s browser, the user’s click, and the software’s automated behavior are chained together, even a local environment can become an attack path.

The real issue is not “Is it exposed to the internet?” but “On what trust assumptions is the software designed?”
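One way to make a trust assumption explicit is shown in the sketch below: a local service does not treat "the request came from 127.0.0.1" as proof of trust. Browsers include an `Origin` header on WebSocket handshakes but do not block cross-origin WebSocket connections themselves, so a web page the user visits can often reach a local service; the server has to check the origin itself. The trusted origin values and the function name are hypothetical.

```python
import ipaddress
from typing import Mapping

# Hypothetical origins that the local UI is served from.
TRUSTED_ORIGINS = {"http://127.0.0.1:8080", "http://localhost:8080"}


def accept_local_request(remote_addr: str, headers: Mapping[str, str]) -> bool:
    """Decide whether a request to a local service should be trusted.

    Arriving over loopback is necessary but not sufficient: the browser may
    be relaying a request started by an unrelated web page, so the Origin
    header must also name a trusted UI.
    """
    lowered = {k.lower(): v for k, v in headers.items()}  # headers are case-insensitive
    if not ipaddress.ip_address(remote_addr).is_loopback:
        return False
    return lowered.get("origin") in TRUSTED_ORIGINS
```

Requests with no `Origin` header (for example, from a command-line client) are rejected here; a real service would authenticate those through a separate mechanism rather than fall back to trusting loopback.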

[Is open source safe?]

Many companies say, “Isn’t open source safe because the code is public?” That is partially true. But being public and actually being reviewed are two different things. In reality, many open‑source projects do not have dedicated security staff. Regular, systematic security audits often do not happen. Even after a vulnerability is disclosed, it can take weeks to months before patches are applied.

And open-source components make up an estimated 70–90% of a typical modern application’s codebase; in AI systems, the share is even higher.

In other words, we are not using a single product; we are incorporating hundreds of external code components into our software supply chain. The OpenClaw incident shows that if even one of those links is weak, the entire chain can collapse.

[Why AI systems are especially dangerous]

AI agents are different from traditional software.

1️⃣ They demand broad privileges: automation requires high-level system permissions.

2️⃣ Their integration scope is wide, connecting to messengers, APIs, file systems, and external services.

3️⃣ They are built on trust-by-default assumptions: designed to “help” with whatever users request, they are often fragile against malicious input.

4️⃣ Their behavior is dynamic, making it hard to predict risks through static code analysis alone.
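Points 1 and 3 can be countered in part by replacing trust-by-default with an explicit, least-privilege policy per agent. The sketch below is a hypothetical policy model, not OpenClaw's configuration format; the tool names are placeholders for a news-briefing agent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical per-agent policy: any tool not listed is denied."""
    allowed_tools: frozenset
    needs_approval: frozenset = frozenset()


def authorize(policy: ToolPolicy, tool: str, approved_by_user: bool = False) -> bool:
    if tool not in policy.allowed_tools:
        return False  # deny by default instead of trusting the request
    if tool in policy.needs_approval and not approved_by_user:
        return False  # high-impact actions need an explicit human yes
    return True


# Hypothetical tool names for a news-briefing agent.
briefing_policy = ToolPolicy(
    allowed_tools=frozenset({"fetch_url", "post_slack", "write_file"}),
    needs_approval=frozenset({"write_file"}),
)
```

A static policy like this narrows what a hijacked or manipulated agent can do, but it does not address point 4: the agent's dynamic behavior still has to be monitored at runtime.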

In short, adopting AI is not merely a functional extension; it is also an expansion of software supply chain risk.

[Four principles of software supply chain security]

The OpenClaw case poses a clear set of questions.

- Do we know what we are using? (Visibility)

Do you maintain an SBOM (Software Bill of Materials) to understand which open-source components exist inside your systems?

- Are we continuously monitoring?

When a new CVE is published, can your organization immediately determine its impact?

- Can we respond quickly?

Are processes for patching, token rotation, and risk assessment automated?

- Are we looking at the entire supply chain?

Not just code, but builds, packages, deployment, APIs, and runtime configurations: are all stages secure?
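The first two questions meet when a new CVE is published: with an SBOM on hand, checking impact becomes a lookup. The sketch below reads a CycloneDX-style JSON SBOM (a real SBOM format whose `components` entries carry `name` and `version`) and flags components older than a fixed version. The advisory values are hypothetical, and the version parser assumes purely numeric dotted versions.

```python
import json
from typing import List, Tuple


def parse_version(v: str) -> Tuple[int, ...]:
    # Assumes numeric dotted versions such as "1.4.2"; real tools need a
    # proper parser for pre-releases and other versioning schemes.
    return tuple(int(part) for part in v.split("."))


def affected_components(sbom_json: str, package: str, fixed_in: str) -> List[str]:
    """List SBOM components named `package` whose version predates the fix."""
    bom = json.loads(sbom_json)
    hits = []
    for comp in bom.get("components", []):
        if comp.get("name") == package and "version" in comp:
            if parse_version(comp["version"]) < parse_version(fixed_in):
                hits.append(f'{comp["name"]}@{comp["version"]}')
    return hits


# Hypothetical SBOM and advisory values for illustration.
sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "example-agent", "version": "1.4.2"},
        {"type": "library", "name": "other-lib", "version": "0.9.0"},
    ],
})
print(affected_components(sbom, "example-agent", fixed_in="1.5.0"))
```

Running this check automatically whenever an advisory arrives, rather than by hand, is what turns the third question ("Can we respond quickly?") into a yes.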

[AI adoption is innovation, but also responsibility]

The concept of software supply chain security is no longer unfamiliar.

The Log4Shell incident already showed us how a single vulnerability in one open-source library can threaten thousands of companies around the world at the same time. Now that threat has evolved one step further. AI agents and LLM-based tools are emerging as a new attack surface in the software supply chain.

AI agents like OpenClaw are not mere libraries.

These tools read and write the file system, communicate with external APIs, and receive and execute commands through messaging platforms. What if one of the libraries such an agent depends on contains a known vulnerability or hides an undiscovered zero-day? From an attacker’s perspective, there is no more attractive entry point.

In the AI era, competitiveness depends not on how fast you adopt AI, but on how safely you operate it.

Software supply chain security is no longer just a developer issue.

It is a core strategy of organizational risk management.

[How to use open source safely]

The essence of supply chain security is visibility, automation, and continuous governance.

New vulnerabilities will continue to be discovered.

What matters is building a system that allows us to discover and respond to vulnerabilities before they halt the business.

In the AI era, establish a foundation to leverage open‑source innovation safely. Labrador Labs is here to help.

Labrador Labs responds accurately and quickly to security threats across AI agents including OpenClaw.

Labrador Labs analyzes both source code and library-level dependencies to immediately determine whether a given vulnerability actually exists inside a customer’s codebase and whether it is reachable along execution paths.
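To make "reachable along execution paths" concrete, here is a generic illustration of the idea, not Labrador Labs' implementation: given a call graph, a breadth-first search from an entry point tells whether a vulnerable function can be called at all, and by which chain. The graph and function names are hypothetical; real tools must first build the call graph through static analysis, which only approximates dynamic behavior.

```python
from collections import deque
from typing import Dict, List, Optional


def find_path(call_graph: Dict[str, List[str]], entry: str, target: str) -> Optional[List[str]]:
    """Breadth-first search from `entry`; return one call chain to `target`, or None."""
    parents: Dict[str, Optional[str]] = {entry: None}
    queue = deque([entry])
    while queue:
        fn = queue.popleft()
        if fn == target:
            path = []
            node: Optional[str] = fn
            while node is not None:  # walk back to the entry point
                path.append(node)
                node = parents[node]
            return path[::-1]
        for callee in call_graph.get(fn, []):
            if callee not in parents:
                parents[callee] = fn
                queue.append(callee)
    return None


# Hypothetical call graph of an application using a vulnerable dependency.
graph = {
    "main": ["handle_message", "load_config"],
    "handle_message": ["parse_markdown"],
    "load_config": ["yaml_load"],
    "parse_markdown": [],
}
```

If `find_path(graph, "main", "yaml_load")` returns a chain, the vulnerable function is reachable and patching is urgent; if it returns `None`, the dependency is present but the flaw is not exercised from that entry point.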

Open‑source AI tools like OpenClaw, which rapidly spread throughout organizations, will continue to appear. And vulnerabilities will continue to follow. From the moment a tool is first introduced into your environment to every new version release and every newly registered CVE, Labrador Labs performs continuous verification without pause.

[OpenClaw Analysis Result – Labrador Labs]

AI is evolving rapidly. Threats are evolving just as quickly.

Providing safety for innovation is exactly what Labrador Labs does.

📌 Build a secure software supply chain with Labrador Labs today!

Phone: US Office +1 650-278-9253 (Mon–Fri, 9 AM–6 PM)

Email: contact@labradorlabs.ai (1:1 demo requests and pricing inquiries)

[References]

https://depthfirst.com/post/1-click-rce-to-steal-your-moltbot-data-and-keys

https://www.cve.org/CVERecord?id=CVE-2026-25253